Introduction: The photos in this post were all taken by my wife, Ashala, on 70mm Ektachrome 64 film with a Hasselblad ELM camera. Those photos were stored away in my “archive” in a binder from 1981 until recently when I began the process of digitizing my film. I “found” these photos last week when digitizing them. To learn more about my digitizing project, please click here.

I may have mentioned in these blogs that I am a commercial pilot.

According to my pilot’s certificate, I am limited to flying lighter-than-air craft with an airborne heater (hot-air balloons). I received my pilot’s license in 1974 after training under the famous pilot Deke Sonnichsen of Menlo Park, California.

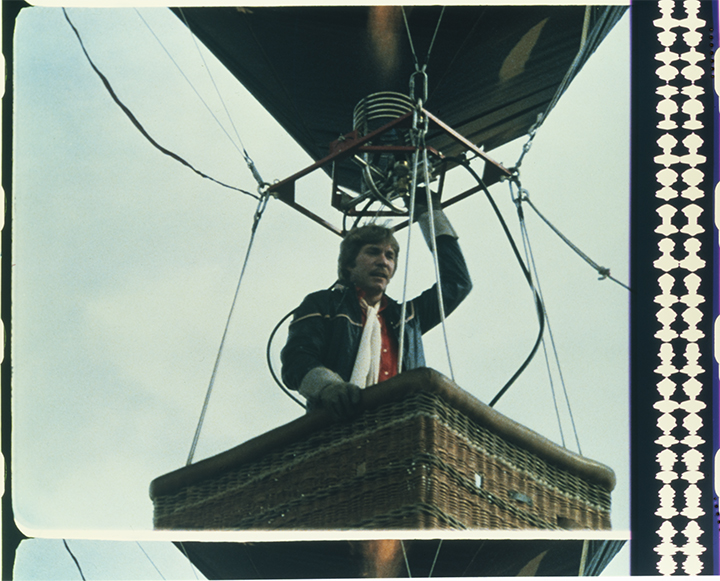

I am the licensed pilot. In the basket is actor Christopher Stone, who was “piloting” the balloon in the commercial. Judging from the tether line attached to the basket and the angle of the balloon, I was being pulled upwind by my ground crew in this image. On the ground are the camera crew, sound recordist, director, et al.

I have owned and operated a number of balloons, and have had the privilege of flying in the U.S., Mexico, Germany, France, the Netherlands, and Russia (the subject of another blog soon). I have over 500 hours as pilot-in-command, and have enjoyed many years of flying in beautiful locations with wonderful passengers.

I retired as a pilot a few years ago when I decided that there are old pilots, and bold pilots, but very few old, bold pilots. I had about 45 years of experience and it was time to become a balloon ground crew member.

From 1979 through 1984 I was Editor in Chief of Ballooning magazine, an international journal for balloonists. We had thousands of subscribers in many countries. In that role I traveled the U.S. and Europe, photographing and participating in ballooning events. It was a colorful and exciting experience. We received an Award of Merit from Communication Arts Magazine for Ballooning; I count that as one of my greatest design and publishing achievements.

In 1981 I was contacted by a Hollywood producer about flying a balloon for a television commercial for Lowenbrau beer. I would pilot the balloon while a helicopter flew a camera adjacent to me, and that footage would be the start of the commercial that ended with the well-known “Here’s to good friends…” song, and a group of balloonists and their pilot in an après-flight toast. Simple, right?

The balloon would be “piloted” in the aerial scenes by an actor, Christopher Stone (The Howling), who would act as the pilot. Meanwhile, I was hidden below him in the basket, actually operating the balloon while looking out the side of the wicker basket through a two-inch hole where a leather strap passes to hold the propane tanks in place (you can see the small holes in the photo above). Simple, right?

Mr. Stone was a seasoned actor, and a handsome guy who fit the role perfectly. He had a single line to deliver on-camera from the balloon basket. He shouted to his crew on the ground: “I’ll be landing this thing in about five minutes, and I expect a suitable reception!!” Simple, right?

The problem was that he blew his line about a dozen times. He just couldn’t get it right.

And the simple problem with retaking a shot like that was that the balloon, weighing several tons (including the hot air inside), had to be pulled back upwind to start each new take. I had a small crew of helpers on the ground to do this. They would all get on a ground line and pull the balloon upwind several hundred yards, while I kept it buoyant. After about ten of those upwind hikes, my crew was ready to mutiny.

The helicopter, a Bell 206 Jet Ranger, hovered nearby. Onboard was Academy Award-winning cinematographer Conrad Hall (Butch Cassidy and the Sundance Kid), using a Panavision camera shooting 35mm film on a Tyler vibration-canceling camera mount. The helicopter pilot was Rick Holley.

Actor Christopher Stone was overheard later saying that flying a hot-air balloon is easy.

Flying one while crouched below the rim of the basket, and operating the burner by turning the liquid propane valve on one of the cylinders while looking out of a two-inch hole was not very easy. Normal operation is with a blast valve on the burner frame. Operating it with eight feet of hose between the tank valve and the burner introduced considerable latency to the process. I had to anticipate more, and stop sooner. I also had a vent line, a small rope that opens a vent in the balloon to let hot air out, and allow the balloon to descend. Those are the only controls in a hot-air balloon.

The filming went well, and at the end of the second day we had all the shots needed to complete the project. I went back to being the editor of Ballooning magazine, and all the production people went on to make more Lowenbrau commercials.

I have scoured the Web, and have not been able to find the commercial we made. There are many “Here’s to good friends…” commercials out there, but this one didn’t make the cut, so to speak, for inclusion in the historical record.

I was overheard later saying that acting is easy.